Extended Intelligences II: Co-Creative Music Agent

Project Overview

This project explores a distributed intelligence system where humans and AI collaborate to create music through physical interaction. The system interprets emotional and rhythmic inputs from sensors, processes them through an LLM agent, and generates lyrics, musical references, and light behavior. Despite technical constraints, the project demonstrates how imperfect sensing can still facilitate meaningful co-creative experiences between human and non-human intelligences.

System Logic

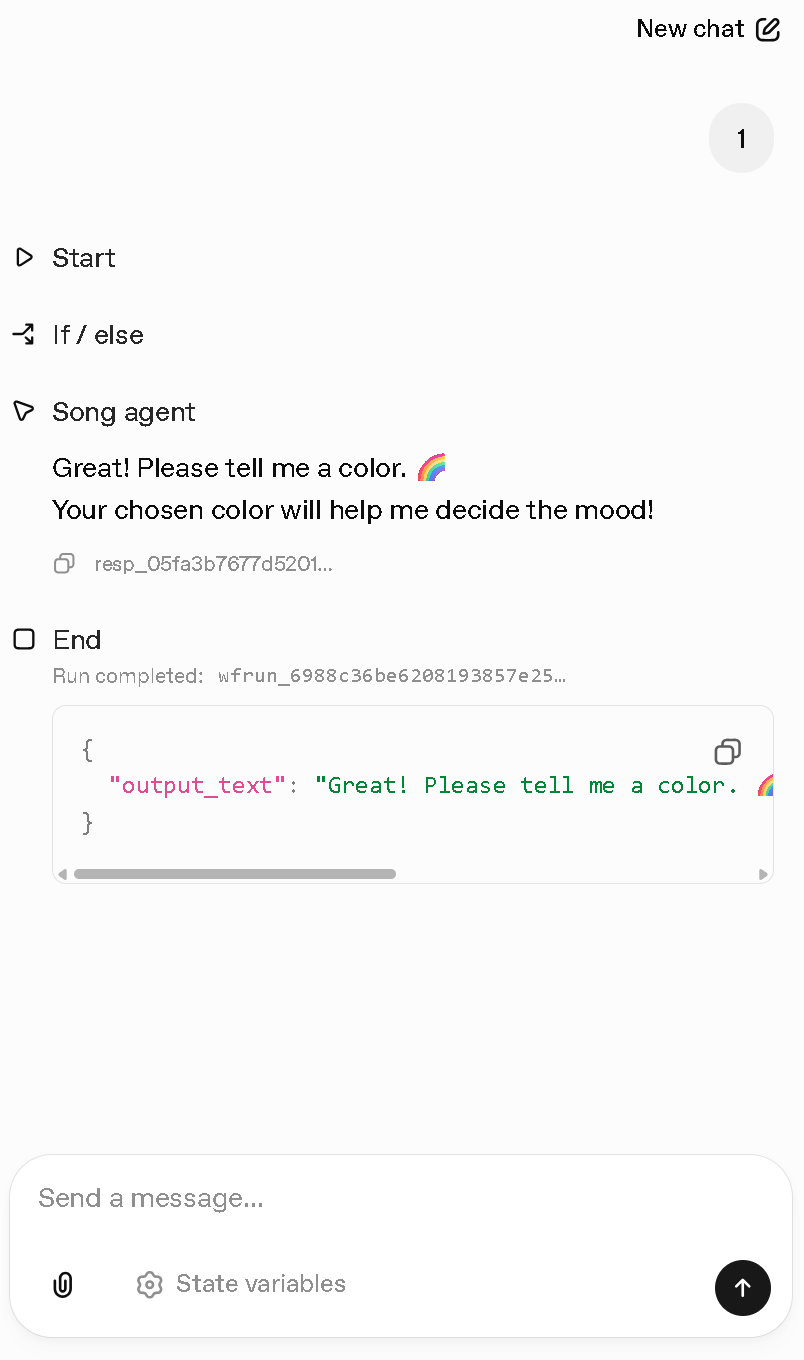

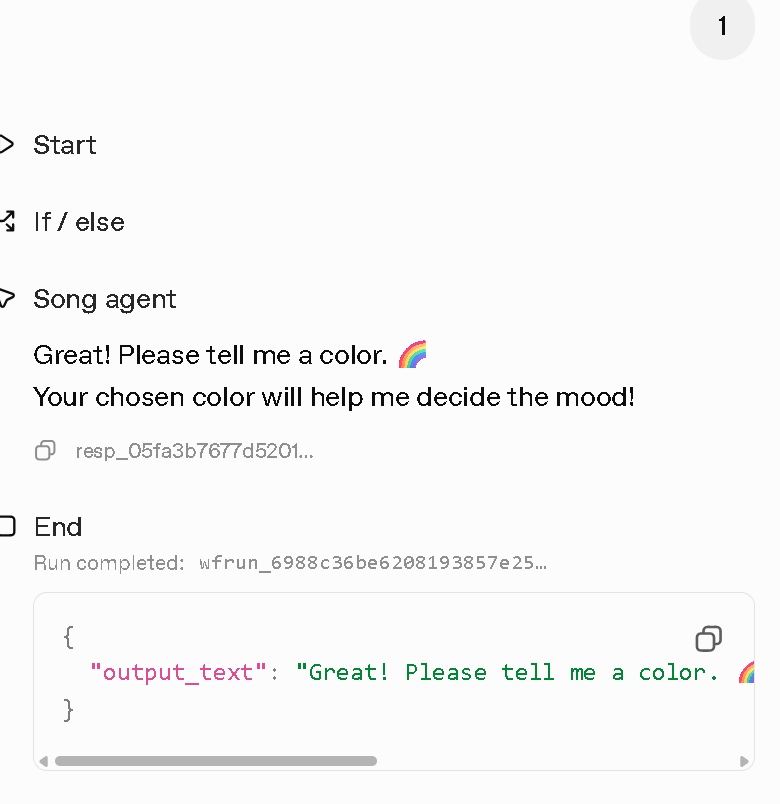

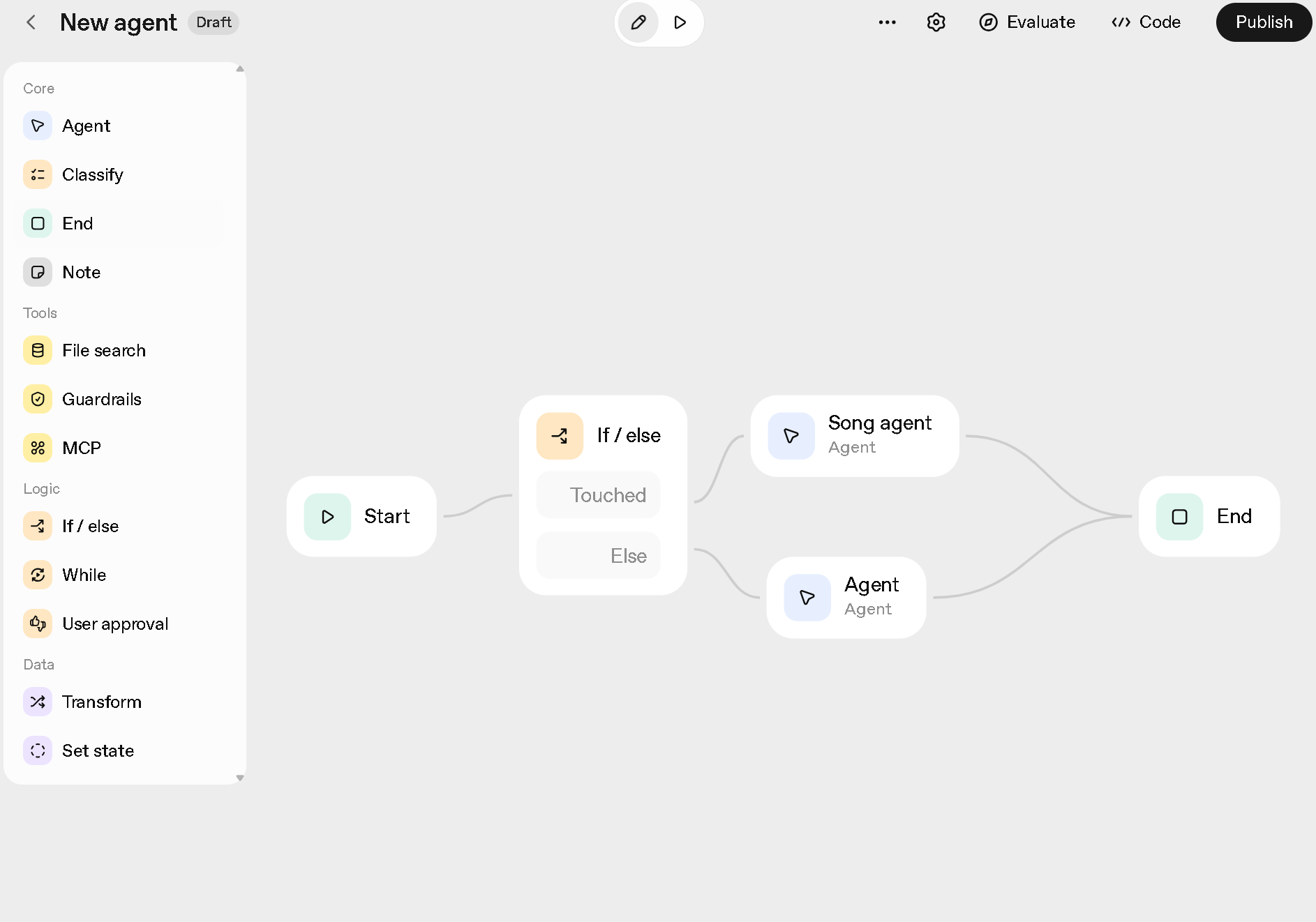

Interaction Pipeline:

- Physical Inputs: Touch (rhythm) + Color (mood)

- AI Interpretation: LLM maps inputs to emotional tone

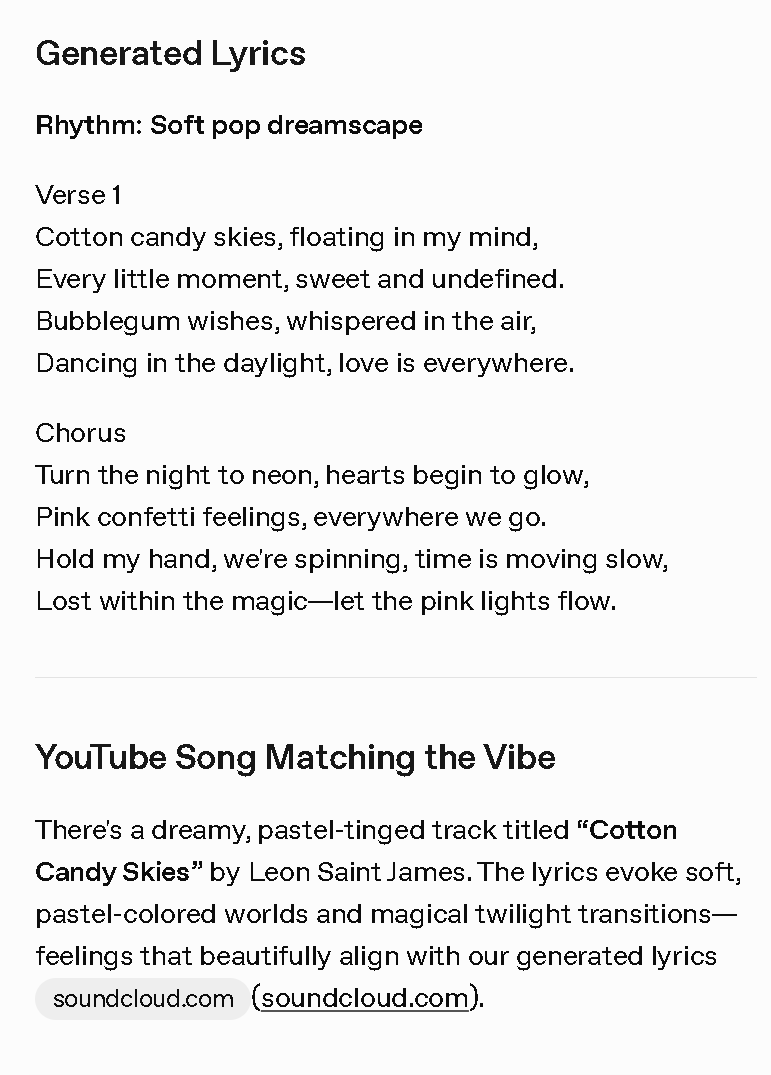

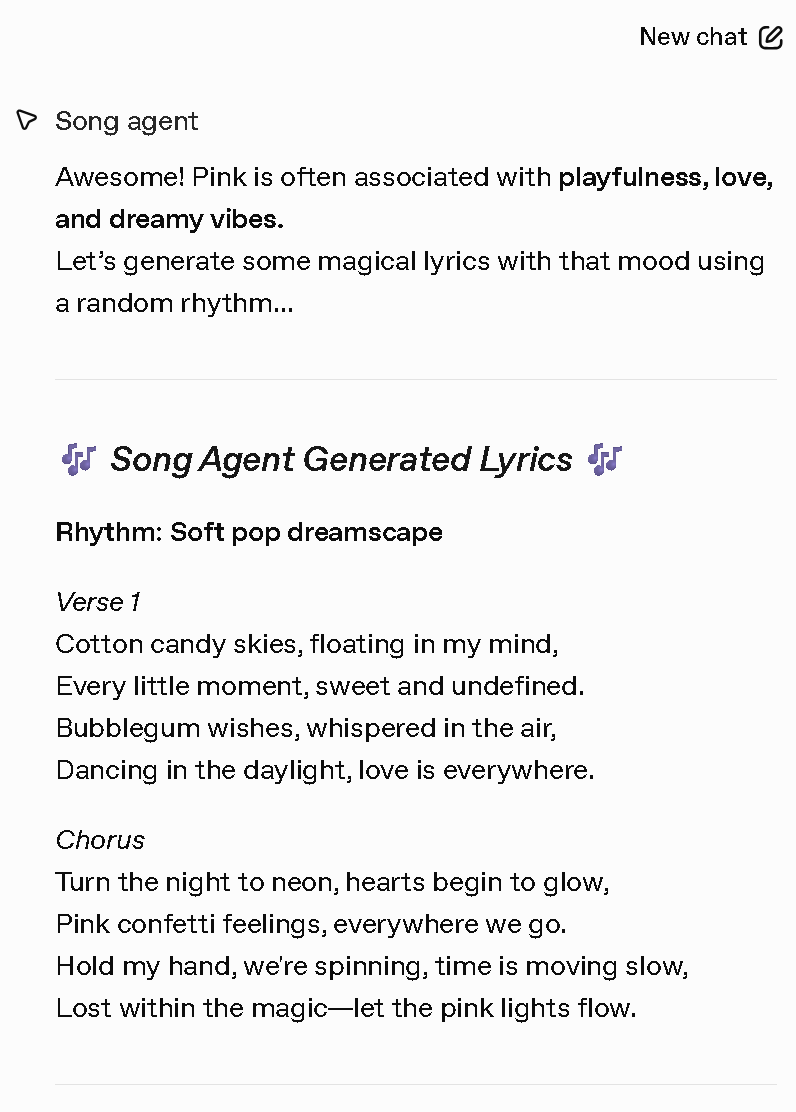

- Generative Output: Original lyrics + YouTube reference

- Physical Feedback: NeoPixel light patterns

- Closed Loop: Human responds to generated content

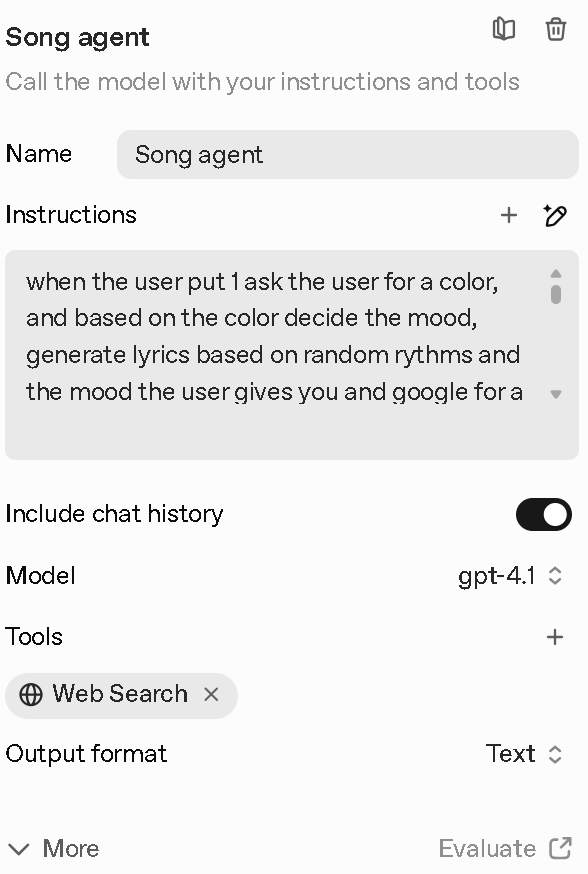
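For the color input stage, one possible way to turn a raw RGB reading into one of the mood colors is a nearest-reference lookup. The sketch below is an assumption, not the project's code: the reference values are illustrative rather than calibrated TCS34725 readings, and `REFERENCE_COLORS` and `classify_color` are hypothetical names.

```python
# Reference RGB values for the five mood colors.
# Assumption: illustrative values, not calibrated sensor readings.
REFERENCE_COLORS = {
    "red": (255, 0, 0),
    "blue": (0, 0, 255),
    "green": (0, 255, 0),
    "yellow": (255, 255, 0),
    "purple": (128, 0, 128),
}


def classify_color(rgb):
    """Return the name of the reference color closest to an (r, g, b) reading."""
    def squared_distance(name):
        ref = REFERENCE_COLORS[name]
        return sum((a - b) ** 2 for a, b in zip(rgb, ref))

    # Pick the reference color with the smallest squared Euclidean distance.
    return min(REFERENCE_COLORS, key=squared_distance)
```

A reading such as `(200, 30, 40)` would be classified as `"red"`. A real sensor would likely need its own reference values, measured under the installation's lighting.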

AI Prompting & Agent Behavior

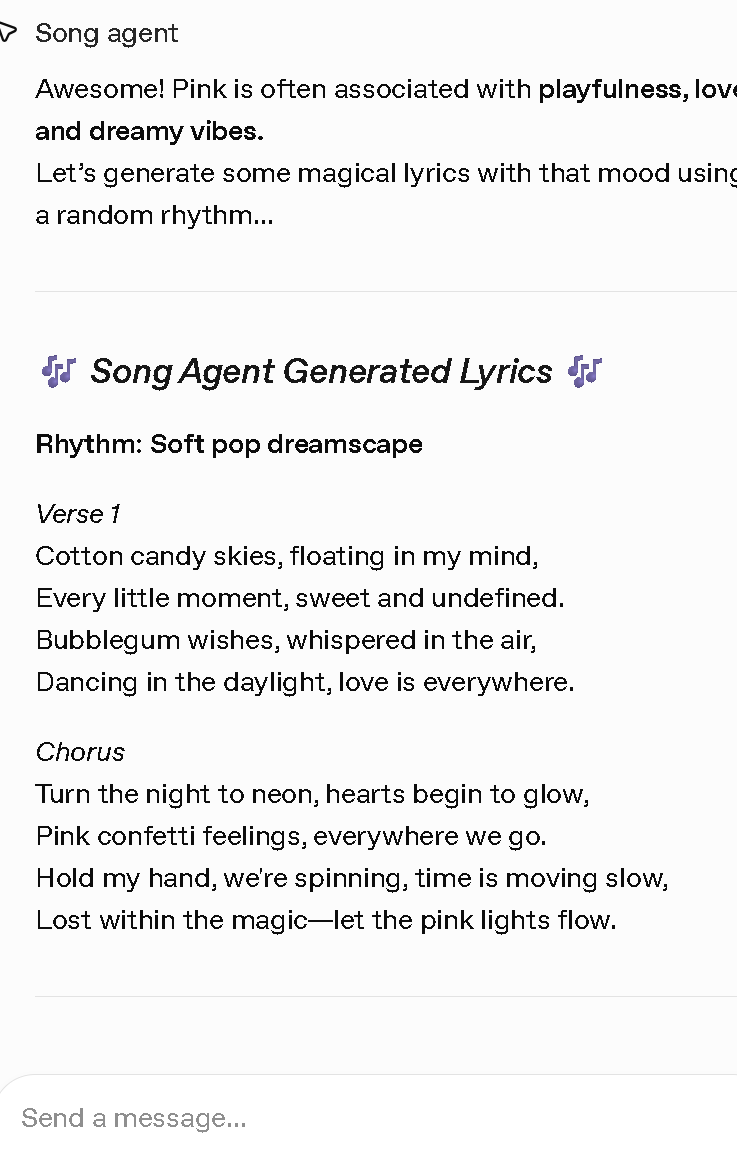

AI Agent Prompt Logic (Working Implementation):

When user provides color input:

1. Map color to mood spectrum:
   - Red → Passionate/Intense
   - Blue → Melancholic/Calm
   - Green → Hopeful/Growing
   - Yellow → Joyful/Energetic
   - Purple → Mysterious/Introspective
2. Generate lyrics incorporating:
   - Mood-derived emotional tone
   - Randomized rhythmic structure
   - Coherent thematic development
3. Search YouTube for matching song:
   - Similar emotional vibe
   - Compatible musical genre
   - Contemporary relevance
4. Return structured response:
   - Generated lyrics
   - Song recommendation with link
   - NeoPixel light pattern code
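The prompt logic above could be wrapped in a small amount of deterministic code that builds the instruction for the LLM and checks the structure of its reply. This is a minimal sketch under assumptions: the function names, the JSON reply format, and the field names (`lyrics`, `song_title`, `song_url`, `light_pattern`) are hypothetical and not taken from the project. Only the color-to-mood table comes from the prompt itself.

```python
import json

# Color-to-mood table taken from the prompt logic.
COLOR_TO_MOOD = {
    "red": "Passionate/Intense",
    "blue": "Melancholic/Calm",
    "green": "Hopeful/Growing",
    "yellow": "Joyful/Energetic",
    "purple": "Mysterious/Introspective",
}

# Hypothetical field names for the structured response.
REQUIRED_KEYS = ("lyrics", "song_title", "song_url", "light_pattern")


def build_agent_prompt(color):
    """Build the instruction text sent to the LLM for one color input."""
    mood = COLOR_TO_MOOD.get(color.lower())
    if mood is None:
        raise ValueError(f"unsupported color: {color!r}")
    return (
        f"The user showed the color {color.lower()}, which maps to the mood "
        f"'{mood}'. Write short original lyrics in that emotional tone, "
        "suggest one matching song, and describe a light pattern. "
        "Reply as JSON with the keys: " + ", ".join(REQUIRED_KEYS) + "."
    )


def parse_agent_response(text):
    """Parse the LLM reply and check that every expected field is present."""
    data = json.loads(text)
    missing = [key for key in REQUIRED_KEYS if key not in data]
    if missing:
        raise ValueError(f"response is missing fields: {missing}")
    return data
```

Keeping the mapping in code and leaving only the open-ended parts (lyrics, song choice) to the model is one design option. The project as described lets the agent handle the mapping itself.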

Agent Interactions

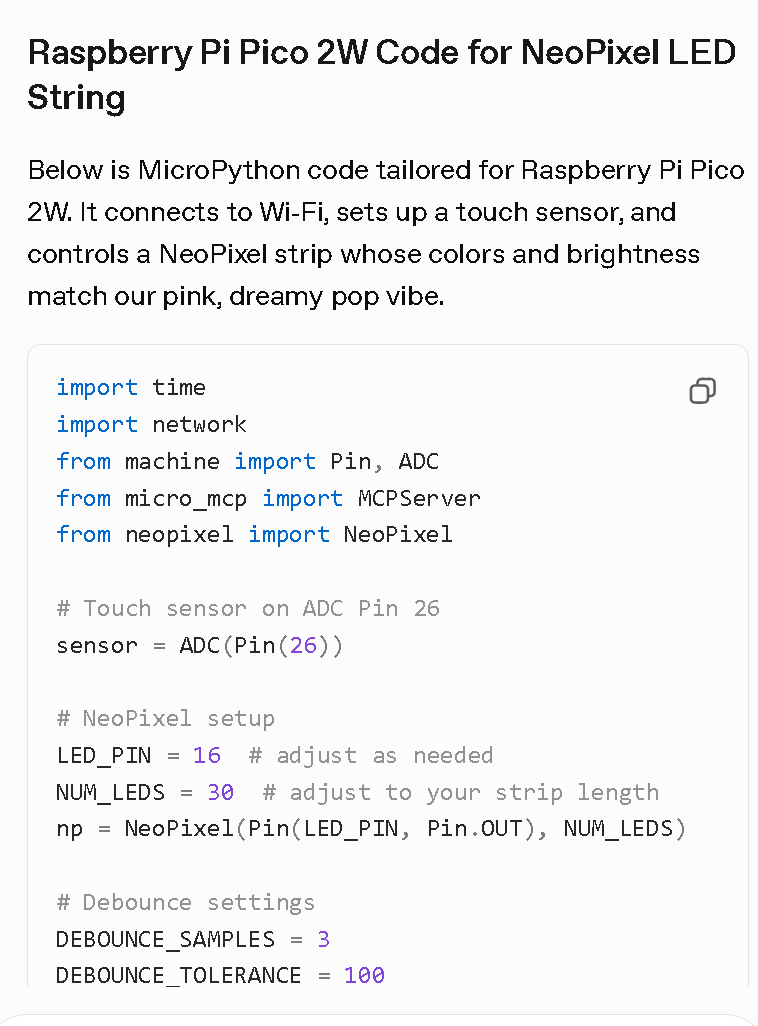

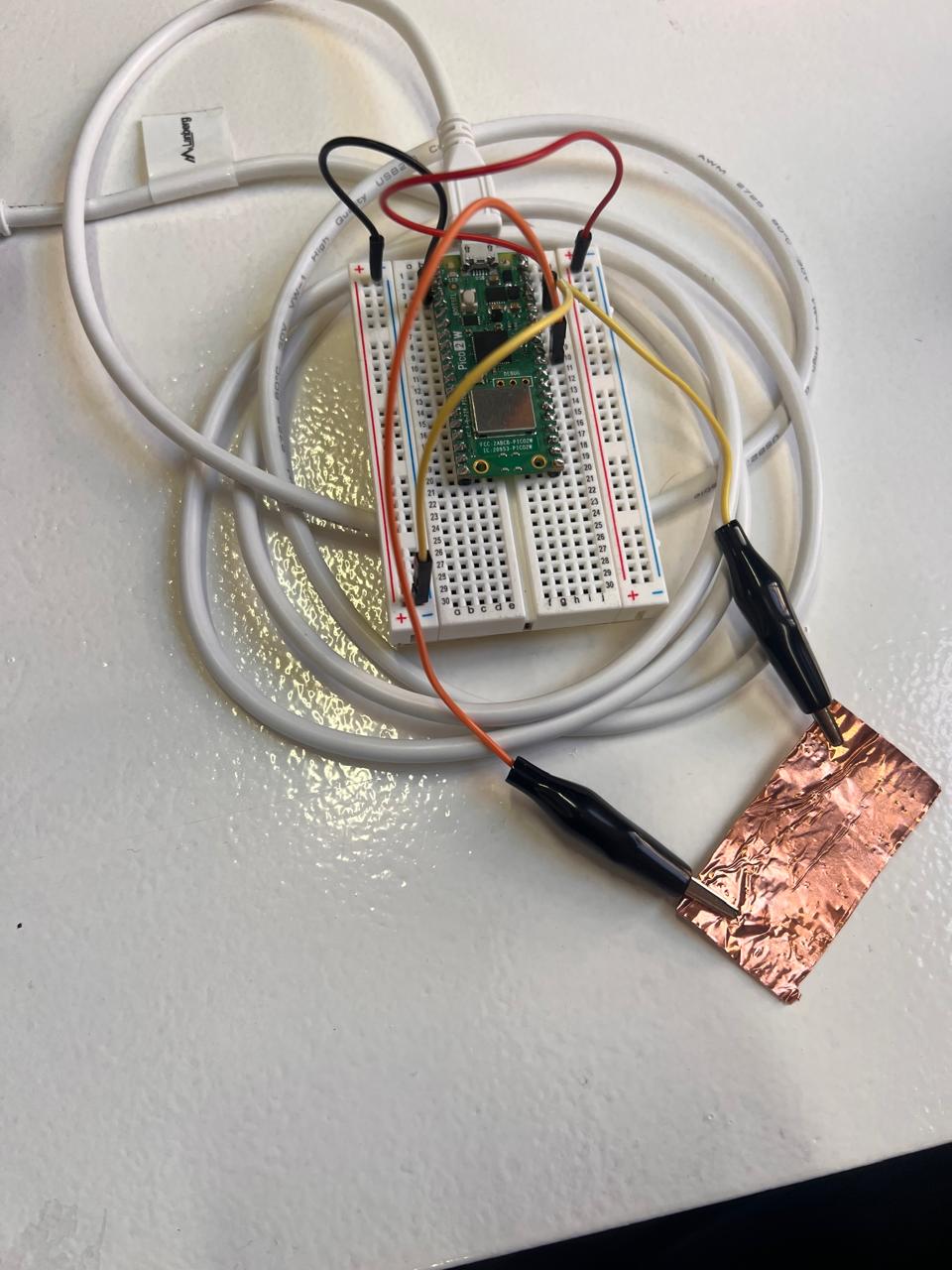

Physical Computing Setup

Hardware Components

- Raspberry Pi Pico 2W: Main controller with WiFi

- Capacitive Copper Strips: Rhythm detection (touch sensing)

- Color Sensor (TCS34725): Mood input detection

- NeoPixel LED Strip: Visual feedback system

- Breadboard & Wiring: Prototyping infrastructure

Working Raspberry Pi Code

```python
import time

import network
from machine import Pin, ADC
from micro_mcp import MCPServer

# Touch sensor (capacitive copper strip) on ADC pin 26
sensor = ADC(Pin(26))

# Debounce settings
DEBOUNCE_SAMPLES = 3      # readings in a row that must agree
DEBOUNCE_TOLERANCE = 100  # allowed difference in raw ADC counts
SAMPLE_INTERVAL = 0.05    # seconds between samples


def read_debounced():
    """Block until DEBOUNCE_SAMPLES readings in a row stay within
    DEBOUNCE_TOLERANCE of the first reading of the run, then return it."""
    stable_count = 0
    last_stable = None
    while stable_count < DEBOUNCE_SAMPLES:
        value = sensor.read_u16()
        if last_stable is None:
            last_stable = value
            stable_count = 1
        elif abs(value - last_stable) <= DEBOUNCE_TOLERANCE:
            stable_count += 1
        else:
            # Reading drifted: restart the run from the new value
            last_stable = value
            stable_count = 1
        time.sleep(SAMPLE_INTERVAL)
    return last_stable


# WiFi connection
wlan = network.WLAN(network.STA_IF)
wlan.active(True)
wlan.connect("Iaac-Wifi", "EnterIaac22@")
# Wait until the connection is up before starting the server
while not wlan.isconnected():
    time.sleep(0.5)

# MCP server setup
mcp = MCPServer(name="music-agent", version="1.0.0")


@mcp.tool(
    name="read_touch_sensor",
    description="Read touch sensor state",
    input_schema={"type": "object", "properties": {}}
)
def read_touch_sensor():
    # Take 5 samples over one second; report a touch if at least 3 read 0
    readings = []
    for _ in range(5):
        readings.append(sensor.read_u16())
        time.sleep(0.2)
    zeros = sum(1 for v in readings if v == 0)
    return "Touched" if zeros >= 3 else "Not Touched"


mcp.run(port=8080)
```

Technical Constraints Encountered:

- Capacitive sensing limited to binary detection

- Rhythm detection required signal stabilization

- OS compatibility issues with real-time LED control

- Color sensor integration delayed by connectivity problems

- Limited timeframe for calibration and refinement
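Even with binary touch detection, a rhythm estimate could in principle be recovered from when touches begin rather than from how strong the signal is. The following is a minimal sketch under that assumption, not something the final system implemented: `detect_onsets` and `estimate_bpm` are hypothetical helpers, and the samples are assumed to be already stabilized into `True`/`False` values with a timestamp in seconds for each.

```python
def detect_onsets(samples, timestamps):
    """Return the times at which the touch state changes from False to True."""
    onsets = []
    previous = False
    for touched, t in zip(samples, timestamps):
        if touched and not previous:
            onsets.append(t)
        previous = touched
    return onsets


def estimate_bpm(onsets):
    """Estimate tempo from the mean interval between consecutive onsets.

    Returns None when there are fewer than two onsets or the intervals
    are not positive, since no tempo can be derived in those cases.
    """
    if len(onsets) < 2:
        return None
    intervals = [b - a for a, b in zip(onsets, onsets[1:])]
    mean_interval = sum(intervals) / len(intervals)
    if mean_interval <= 0:
        return None
    return 60.0 / mean_interval
```

For example, touches that begin every half second would yield an estimate of about 120 BPM, which could then be passed to the agent as a rhythmic hint alongside the color.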

What Worked vs. What Didn't

✅ Successful Implementation

- MCP Architecture: Stable connection between physical inputs and AI agent

- Prompt Logic: Effective color-to-mood mapping and lyric generation

- Conceptual Pipeline: Clear flow from sensor → AI → output → feedback

- Agent Behavior: AI made coherent cross-domain decisions (emotion, rhythm, visuals, references)

- Distributed Intelligence: Demonstrated shared agency between human and AI

❌ Limitations & Challenges

- Sensor Resolution: Touch detection remained binary, not rhythmic

- Hardware Integration: NeoPixel and color sensor not fully operational

- Calibration Time: Signal stability required more development time

- OS Conflicts: Windows connectivity issues with Raspberry Pi

- Real-time Feedback: Light patterns not synchronized in final demo

Reflection & Learnings

This project began as an exploration of shared musical creation between human and AI, where physical interaction (touch and color) would become a language for co-creation. The idea was poetic: an AI that responds not just to words, but to the body's rhythm and the emotion of color.

In practice, the project quickly became a lesson about friction. Working within a very short timeframe and dealing with hardware limitations, operating system conflicts, and unreliable capacitive sensing meant that many parts of the physical system never fully behaved as expected. While the original intention was to create a more immediate and embodied interaction, the project revealed that even without real-time musical co-creation, it is still possible to meaningfully collaborate with an AI through a small set of inputs. This shift highlighted how artificial intelligence can act as a creative partner, interpreting minimal signals and expanding them into richer musical and atmospheric outcomes.

Because so much of the project had to be improvised, the system ultimately relied more on the LLM than originally intended. While this constrained the physical implementation, it also showed how powerful it is to integrate an AI that can interpret ambiguous, emotional, and incomplete inputs, something that would be extremely difficult to achieve through deterministic code alone. With more time, the project could have pushed further into embodied interaction. Even in its partial form, it showed how rich and complex the communication between hardware and software can be, and how valuable this relationship is as a space for exploration and learning.